Exploring the Face Detection Process and Its Uses in AR

Computer face detection is a process by which the computer locates the faces present in an image or video. This process is not as simple as you might think. Currently, there are different methods to perform face detection by computer.

The face detection algorithm is based on a function that searches an image for rectangular regions containing objects that, with high probability, resemble those in a training set, and returns the rectangular regions where they were found. The function scans the image several times at different scales so that it can find similar objects of different sizes.
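To make the multi-scale scan concrete, here is a minimal sketch of how candidate rectangles could be generated. The function name and the default values for `base_size`, `scale_factor`, and `step_ratio` are assumptions chosen for illustration, not parameters of any particular detector. A real detector would run its classifier on each rectangle that this generator yields.

```python
from typing import Iterator, Tuple


def sliding_windows(
    image_w: int,
    image_h: int,
    base_size: int = 24,          # assumed smallest window side, in pixels
    scale_factor: float = 1.25,   # assumed window growth between passes
    step_ratio: float = 0.5,      # assumed stride as a fraction of the window side
) -> Iterator[Tuple[int, int, int, int]]:
    """Yield (x, y, width, height) for every candidate square region."""
    size = base_size
    while size <= min(image_w, image_h):
        step = max(1, int(size * step_ratio))
        # One full pass over the image at the current window size.
        for y in range(0, image_h - size + 1, step):
            for x in range(0, image_w - size + 1, step):
                yield (x, y, size, size)
        # Grow the window for the next pass (always by at least one pixel).
        size = max(size + 1, int(size * scale_factor))


windows = list(sliding_windows(48, 48, base_size=24, scale_factor=2.0))
print(len(windows), "candidate regions")
```

Scanning with a growing window is one common way to handle faces of different sizes; resizing the image and keeping the window fixed is an equivalent alternative.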

Facial recognition is over 50 years old. A research team led by Woodrow W. Bledsoe conducted experiments between 1964 and 1966 to see if “programming computers” could recognize human faces. The team used a rudimentary scanner to map the location of the person’s hairline, eyes, and nose. The computer’s task was to find matches.

It was unsuccessful. Bledsoe said: “The problem of facial recognition is made difficult by the great variability in rotation and tilt of the head, intensity and angle of illumination, facial expression, aging, etc.”

In fact, computers have a harder time recognizing faces than beating grandmasters at chess. It would be many years before these problems were overcome.

Thanks to improvements in camera technology, mapping processes, machine learning, and processing speeds, facial recognition has reached maturity.

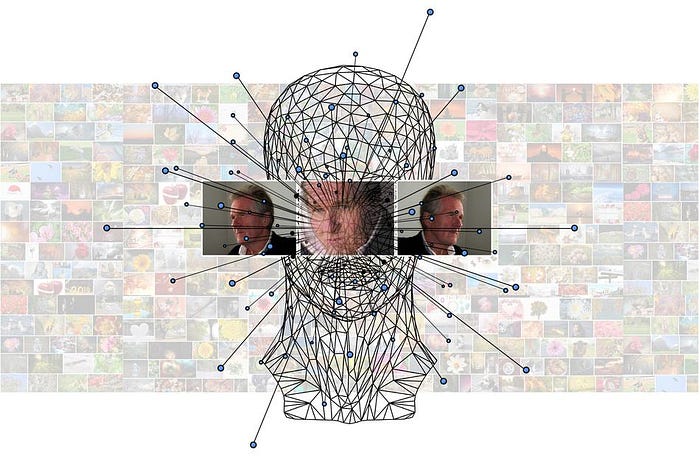

Most systems use 2D camera technology, which creates a flat image of a face and maps the ‘nodal points’ (size/shape of the eyes, nose, cheekbones, etc.). The system then calculates the relative position of the nodes and converts the data into a numeric code. The recognition algorithms search a stored database of faces to find a match.
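The pipeline just described (nodal points, relative positions, numeric code, database search) can be sketched in a few lines. Everything here is an assumption made for illustration: the landmark names, the coordinates, the choice of the eye-to-eye distance as the unit of length, and the nearest-neighbor search. Production systems use far richer descriptors.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def face_code(landmarks: Dict[str, Point]) -> List[float]:
    """Turn landmark positions into a list of scale-independent distance ratios."""
    names = sorted(landmarks)  # fixed order so codes are comparable
    # Assumption: the distance between the eyes is the unit of length.
    unit = math.dist(landmarks["left_eye"], landmarks["right_eye"])
    code = []
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            code.append(math.dist(landmarks[a], landmarks[b]) / unit)
    return code


def best_match(probe: List[float], database: Dict[str, List[float]]) -> Tuple[str, float]:
    """Return the stored identity whose code is closest to the probe."""
    return min(
        ((name, math.dist(probe, code)) for name, code in database.items()),
        key=lambda pair: pair[1],
    )


# Invented landmark coordinates, for illustration only.
alice = {"left_eye": (30, 40), "right_eye": (70, 40), "nose_tip": (50, 65), "chin": (50, 110)}
bob = {"left_eye": (30, 40), "right_eye": (70, 40), "nose_tip": (50, 75), "chin": (50, 125)}
database = {"alice": face_code(alice), "bob": face_code(bob)}

# The same face as "alice", captured at twice the size and shifted in the frame.
probe = {name: (2 * x + 5, 2 * y + 3) for name, (x, y) in alice.items()}
match_name, match_distance = best_match(face_code(probe), database)
print(match_name)
```

Because the code stores ratios rather than raw pixel distances, the match does not depend on how large the face appears in the picture.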

Facial recognition vs. face detection: a major difference

Although “facial recognition” is often used as a catch-all term, that usage is not entirely accurate. There is a key distinction between facial recognition and face detection.

Face detection is the process of finding human faces in an image, without actually identifying people.

Face detection occurs when a system simply tries to establish that a face is present. Social media companies use face detection to filter and organize images in large photo catalogs, for example.

Facial recognition goes one step further: it not only looks for faces but also tries to verify the identity of the people who appear in the image.

Facial recognition describes the process of scanning a face and comparing it against the faces stored in a database to find the same person. This is the approach used to unlock phones or authenticate a person entering a building.

The tools used to train the two systems are different. Desired levels of precision also vary. Clearly, facial recognition used for identification purposes must score higher than any system used solely to organize images.

The confusion between the two processes has caused some controversy. In 2019, a researcher revealed that Amazon’s systems were far better at classifying the gender of light-skinned men than dark-skinned women. That led to fears that surveillance systems could yield more false matches for some ethnic groups. However, Amazon responded that the error rates were related to face detection, which is not used to identify individuals.

Viola & Jones to detect faces

Viola & Jones is a face detection algorithm that, since its publication in 2001, has probably been the reference algorithm for this type of task. It is designed to detect specific kinds of objects, be they faces, cars, cats, or bananas.

In order to detect objects, you need a classifier. We can imagine classifiers as a kind of “template” to detect a certain type of object. This classifier is trained by providing hundreds of example images of the object to be detected.

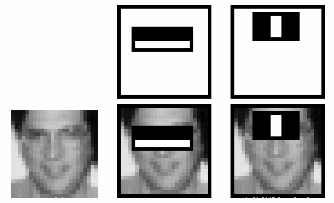

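The classifier looks for visual characteristics in the sample images. The characteristics used are the so-called “Haar-like features”: simple rectangular patterns of adjacent light and dark regions, whose value is the difference between the pixel sums of those regions.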

When training the classifier, combinations of these characteristics are sought in the example images and the most significant ones are chosen to form part of the classifier.

When you want to detect objects, the algorithm applies a series of classifiers (a “cascade of classifiers”) on a region of interest in which it considers that the object in question could be found. If all the classifiers are positive, the algorithm considers that there is a match and the desired object has been found. In our case, a face.
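The idea of a cascade can be sketched in plain Python. The code below computes an integral image (the summed-area table that Viola & Jones use to evaluate rectangle sums quickly), one two-rectangle Haar-like feature, and a cascade that rejects a region as soon as any stage fails. The stages and thresholds are hand-written stand-ins invented for this example; in a real detector they are learned from the training images.

```python
from typing import Callable, List, Sequence

Image = Sequence[Sequence[int]]  # grayscale image as rows of pixel intensities


def integral_image(img: Image) -> List[List[int]]:
    """ii[y][x] holds the sum of all pixels in rows < y and columns < x."""
    h, w = len(img), len(img[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0
        for x in range(w):
            row_sum += img[y][x]
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def rect_sum(ii: List[List[int]], x: int, y: int, w: int, h: int) -> int:
    """Sum of the w-by-h rectangle with top-left corner (x, y), via four lookups."""
    return ii[y + h][x + w] - ii[y][x + w] - ii[y + h][x] + ii[y][x]


def two_rect_feature(ii: List[List[int]], x: int, y: int, w: int, h: int) -> int:
    """Horizontal-edge Haar-like feature: lower half minus upper half."""
    half = h // 2
    top = rect_sum(ii, x, y, w, half)
    bottom = rect_sum(ii, x, y + half, w, half)
    return bottom - top


Stage = Callable[[List[List[int]]], bool]


def cascade_accepts(ii: List[List[int]], stages: List[Stage]) -> bool:
    """all() stops at the first failing stage, so most regions are rejected early."""
    return all(stage(ii) for stage in stages)


# Hypothetical stages with made-up thresholds, for a 4x4 region.
example_stages: List[Stage] = [
    lambda ii: two_rect_feature(ii, 0, 0, 4, 4) > 500,  # dark band above a bright band
    lambda ii: rect_sum(ii, 0, 0, 4, 4) > 1000,         # region is bright enough overall
]

dark_over_bright = [[10] * 4, [10] * 4, [200] * 4, [200] * 4]
flat_gray = [[50] * 4 for _ in range(4)]

print(cascade_accepts(integral_image(dark_over_bright), example_stages))
print(cascade_accepts(integral_image(flat_gray), example_stages))
```

Ordering cheap, permissive stages first is what makes the approach fast: a region that clearly contains no face is discarded after one or two feature evaluations.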

2D technology

2D technology works well in stable and well-lit conditions, as is the case in passport control. However, it is less effective in darker spaces and may not deliver good results when subjects are moving. It is easy to fool with a photograph.

One way to overcome those flaws is through proof-of-life detection. Those systems will look for indicators of a non-live image, such as inconsistent foreground and background features. They can ask the user to blink or move. They are necessary to defeat criminals who try to fool facial recognition systems by using photographs or masks.

Another key advance is the ‘deep convolutional neural network’. It is a type of machine learning in which a model finds patterns in image data, using a network of artificial neurons loosely modeled on the functioning of the human brain. In effect, the network behaves like a black box. During training, it is given input values and its output is checked against the expected result. When the two differ, the system adjusts itself, and this repeats until the network is configured correctly and consistently produces the expected results.
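The check-and-adjust loop is easier to see on a toy model. The sketch below trains a single artificial neuron (a perceptron) on the logical AND function. This is a deliberate simplification and not a deep convolutional network; the function names, learning rate, and epoch limit are assumptions chosen for the example.

```python
from typing import List, Sequence, Tuple

Sample = Tuple[Sequence[float], int]  # (inputs, expected output 0 or 1)


def predict(weights: List[float], bias: float, inputs: Sequence[float]) -> int:
    """Fire (1) when the weighted sum of the inputs plus the bias is positive."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(
    samples: List[Sample], epochs: int = 50, lr: float = 1.0
) -> Tuple[List[float], float]:
    """Adjust weights after every wrong answer until a full pass has no errors."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        errors = 0
        for inputs, expected in samples:
            error = expected - predict(weights, bias, inputs)
            if error != 0:
                errors += 1
                # Nudge each weight in the direction that reduces the error.
                weights = [w + lr * error * x for w, x in zip(weights, inputs)]
                bias += lr * error
        if errors == 0:
            break  # the neuron now gives the expected result for every sample
    return weights, bias


and_gate: List[Sample] = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(and_gate)
print([predict(weights, bias, inputs) for inputs, _ in and_gate])
```

A deep network applies the same principle to millions of weights at once, with gradients instead of this simple update rule.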

3D technology

3D facial recognition software is much more advanced. Using depth-sensing cameras, the facial imprint can include the contours and curves of the face, as well as the depth of the eyes and distances from reference points such as the tip of the nose.

Today, previously advanced processes are reaching mass-market devices. For example, Apple uses 3D camera technology for Face ID on its iPhone X: the TrueDepth camera projects a pattern of infrared dots onto the face and reads it back with an infrared camera to build a depth map. Thermal infrared imaging is a separate technique; it maps face patterns derived primarily from the pattern of superficial blood vessels under the skin.

Apple also sends the captured face pattern to a “secure enclave” on the device. That ensures that the authentication is done locally and that Apple cannot access the patterns.

Analysis of skin texture

This is the most recent technological development, and it differs from the others because it works on a much smaller scale. Instead of measuring the distance between your nose and your eyes, it measures the distance between your pores and then converts those numbers into a mathematical code. This code is known as the “skin print.” In theory, this method could be precise enough to distinguish between twins.

Measurements and precision

Facial recognition systems are evaluated using three criteria.

1. False-positive (also known as false acceptance)

Describes when a system mistakenly makes an incorrect match. The number should be as low as possible.

2. False-negative (also known as false rejection)

With a false negative, a genuine user does not match their profile. This number should also be low.

3. True positive

Describes when a registered user correctly matches their profile. This number must be high.

These three measures are presented in percentages. So, let’s say an entry system tests 1000 people per day. If five unapproved people are allowed in, the false positive rate is five in 1000. That’s one in 200 or 0.5%.
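The arithmetic in this example is simple enough to check in code. The helper below is a minimal sketch; the function name `rate` is an assumption, and it follows the convention used above of dividing by the total number of people tested.

```python
def rate(count: int, total: int) -> float:
    """Return count out of total as a percentage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100.0 * count / total


# Figures from the example above: 5 unapproved people admitted out of 1000 tested.
false_positive_rate = rate(5, 1000)
print(f"{false_positive_rate}% (one in {1000 // 5})")
```

The same helper applies to the false-negative and true-positive counts once those are known.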

So what percentages do current systems reach? The National Institute of Standards and Technology (NIST) regularly tests how well multiple systems search a database of 26.6 million photos.

In its 2018 test, it found that only 0.2% of searches did not match the correct image, compared to a 4% failure rate in 2014. That’s a 20-fold improvement in four years.

NIST computer scientist Patrick Grother says: “The precision gains come from the integration, or complete replacement, of previous approaches with those based on deep convolutional neural networks. As such, facial recognition has undergone an industrial revolution.”

Further confirmation of the technology’s improvement came from the Department of Homeland Security’s Biometric Technology Rally in 2018. In its test, Gemalto’s Live Facial Identification System (LFIS) obtained an acquisition rate of 99.44% in less than five seconds, compared to the 65% average.

Uses of face detection in augmented reality

When we talk about AR facial recognition, we mean software that recognizes the presence of a human face in the device’s camera feed, generates a 3D facial mesh, superimposes different scenarios or augmented reality experiences on it, and can apply modifications in real time.
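As a rough illustration of the superimposing step, the sketch below works out where to draw a 2D overlay, such as a pair of virtual glasses, from two detected eye positions. It is a heavily simplified stand-in for a full 3D facial mesh, and the function name, parameter names, and coordinates are all assumptions made for the example.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float]


def overlay_transform(left_eye: Point, right_eye: Point, overlay_eye_gap: float) -> Dict[str, object]:
    """Compute where to draw an overlay so that it follows the face.

    overlay_eye_gap is the distance, in overlay pixels, between the two
    points of the artwork that should land on the eyes (an assumed input).
    """
    dx = right_eye[0] - left_eye[0]
    dy = right_eye[1] - left_eye[1]
    eye_distance = math.hypot(dx, dy)
    return {
        # Draw the overlay centered between the eyes.
        "center": ((left_eye[0] + right_eye[0]) / 2, (left_eye[1] + right_eye[1]) / 2),
        # Resize it so the artwork's eye gap matches the detected one.
        "scale": eye_distance / overlay_eye_gap,
        # Rotate it by the tilt of the line joining the eyes.
        "angle_deg": math.degrees(math.atan2(dy, dx)),
    }


print(overlay_transform((100.0, 200.0), (200.0, 200.0), overlay_eye_gap=50.0))
```

Recomputing this transform on every camera frame is what makes the overlay appear attached to the face as the person moves.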

As mentioned before, facial recognition can verify a person’s identity and give access to their cell phone. AR face recognition instead aims to create an empathic camera that “understands” the human face, detecting facial features and mapping augmented reality elements onto an individual’s face. Below are just some uses for face detection in augmented reality:

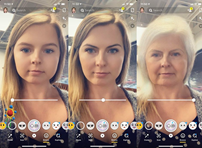

Snapchat-like face filters, touch-up filters, and masks

Retail

Virtual makeover

A new kind of social network

In a nutshell, your face becomes your social profile. Scan your face and add the personal information you like — a Tweet, a video, a song you particularly like.

Anyone with the Halos app installed on their smartphone can scan your face in a crowd (provided you also have the app installed) and instantly see the info bubbles around you. It looks like something with endless social possibilities. (Extracted from https://jasoren.com/commercial-use-cases-of-ar-face-recognition-and-facial-tracking-apps/)